Can Facial Emotion Coding & Speech Sentiment Analysis help with your Consumer Insights projects?

In the wake of the pandemic, many consumer-insight and market research projects have taken to using digital surveys, online focus groups, and online testing of advertising and promotional materials. Increasingly, experienced researchers are turning to cutting-edge technology tools like Emotion AI to add value to the insights from these projects.

This blog aims to introduce the general user to the potential and uses of these technologies across the market research and consumer insights segments.

So, how useful is AI-powered Facial Coding or Speech Sentiment Analysis?

Reduces manual data handling…

Market research can be cumbersome and tedious. Collecting, collating, organizing and then analysing large volumes of user data is not a task for the faint-hearted. However, using new and emerging technologies like facial landmark or emotion coding and speech sentiment analysis, researchers can drastically reduce the manual workload by automatically aggregating granular emotion & engagement data inputs from hundreds or even thousands of respondents or testers.

Focuses on the emotional aspect of buying behaviours!

Anyone who has spent any time in sales or marketing knows that buying behaviour is never rational and that it is, in fact, a customer’s “emotional mind” that actually influences their buying decision. And while we rationally know that customers don’t buy products or services but purchase feelings, it is still startling to note that emotion, not reason, drives 80% of consumer decisions!

So, to thrive as a marketer, you must appeal to a potential customer’s emotions. And today, there is no better or more accurate way to judge whether you have been successful than to use Emotion AI, in the form of facial coding, voice tonality analysis and text sentiment analysis, to understand and gauge user emotion and engagement during a remote or digital interaction with your product, service, content, brand, initiative, etc.!

So, what are Facial Coding & Speech Sentiment Analysis & how do they even work?

To truly understand consumer emotions and enhance if not amplify the impact of their sales and marketing budgets, smart marketers globally are turning to Emotion AI technologies like Emotion Recognition, Facial Coding, Eye Tracking and Speech Sentiment Analysis.

The scope of this particular blog is to look at two of the main technologies in the Emotion AI space: Facial Coding and Speech Sentiment Analysis.

So, let’s start with a quick look at what Speech Sentiment Analysis means!

Understanding AI-powered Speech Sentiment Analysis

Speech sentiment analysis first uses speech transcription to turn a user’s speech or the spoken word into text. It then deploys artificial intelligence (AI), natural language processing (NLP), and machine learning (ML) to automatically track the flow of a user’s emotion while those words were spoken and classify it into sentiment categories like positive, negative, or neutral. Specific keywords or phrases are also interpreted, within the context of the overall speech or text, as being positive, negative or neutral.

The emotion AI algorithm then looks at the overall flow of user emotions and classifies a conversation or monologue as being positive or negative and highlights areas of distress or delight that businesses can consider while dealing with remote or digital interactions that their customers have with their products, services or brand!
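To make the flow described above a little more concrete, here is a minimal sketch in Python. It assumes the speech has already been transcribed into sentences, and it swaps in a tiny hand-made keyword lexicon where a real product would use a trained NLP model. The word lists, the `Segment` class and the `analyse_transcript` function are all hypothetical names made up for this illustration.

```python
from dataclasses import dataclass

# Hypothetical toy lexicon; a real system would rely on a trained NLP model.
POSITIVE = {"love", "great", "easy", "happy", "helpful"}
NEGATIVE = {"hate", "confusing", "slow", "frustrated", "broken"}


@dataclass
class Segment:
    text: str
    score: int   # positive keyword count minus negative keyword count
    label: str   # "positive", "negative" or "neutral"


def label_for(score: int) -> str:
    """Map a numeric score to one of the three sentiment categories."""
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def score_segment(text: str) -> Segment:
    """Score one transcribed sentence by counting lexicon keywords."""
    words = [w.strip(".,!?;:").lower() for w in text.split()]
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    return Segment(text, score, label_for(score))


def analyse_transcript(sentences: list[str]) -> dict:
    """Classify each sentence, then summarise the whole conversation."""
    segments = [score_segment(s) for s in sentences]
    total = sum(seg.score for seg in segments)
    return {
        "overall": label_for(total),
        "flow": [seg.label for seg in segments],
        "distress": [seg.text for seg in segments if seg.label == "negative"],
        "delight": [seg.text for seg in segments if seg.label == "positive"],
    }


# Made-up transcript standing in for the output of speech transcription.
transcript = [
    "I love how easy the sign-up was.",
    "The checkout page was slow and confusing.",
    "Then I got my order confirmation.",
    "Support was great and really helpful.",
]
result = analyse_transcript(transcript)
print(result["overall"])
print(result["flow"])
print(result["distress"])
```

The `flow` list is the sentence-by-sentence view, while `distress` and `delight` pick out the moments a business would probably want to review first.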

Brands can use this technology in multiple settings, from analysing user or customer interactions on calls or video to sorting through customer comments, reviews, social media posts, and survey findings to gain insights.

Understanding ML-powered Facial Coding…

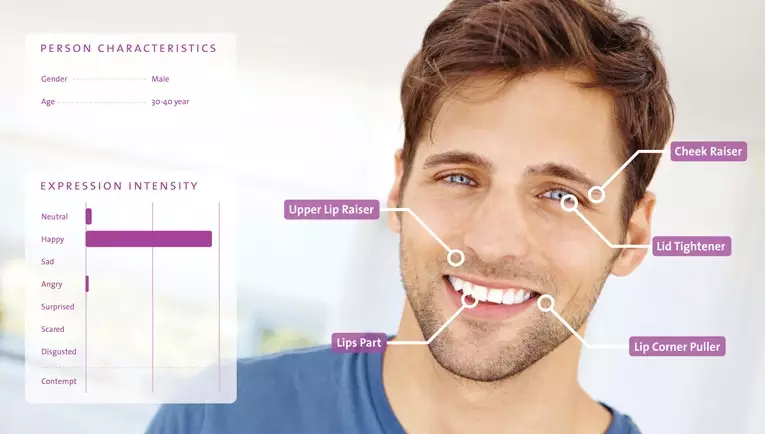

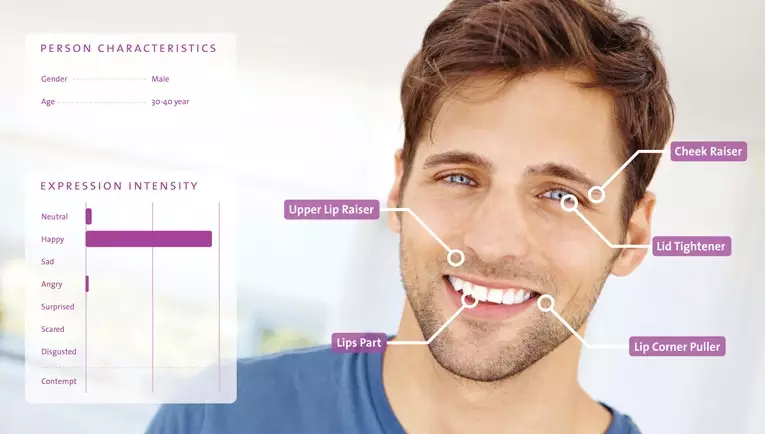

As the world moves to video, Facial Coding has truly come into its own as an Emotion AI technology to be reckoned with! Facial coding is based on machine learning technology, where extensive data sets are deployed to teach computers what human emotion on human faces looks like. Working from Paul Ekman’s theory of 7 macro emotions, facial coding companies spend large amounts of time labelling or annotating human images with emotion labels.

The technology deployment is surprisingly similar to how human learning happens. No child is born with the knowledge of what joy looks like on a human face, or what anger looks like on its parent’s face. It learns over a period of time what a certain emotion looks like by seeing it, and understanding its implications, over hundreds of faces.

Machine learning works in much the same way: computers learn from labelled data what anger, or sadness, could look like on a variety of faces. Then, when they encounter a certain face during a digital interaction, they compare it with what they have learnt from their vast data sets and predict, with a certain level of accuracy, what the emotion might be.
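As a rough illustration of learning from labelled examples and then predicting with a level of confidence, here is a toy sketch in Python. It is not how any particular facial coding product works: it assumes each face has already been reduced to two made-up numeric features, and it uses a very simple nearest-centroid approach in place of the deep learning models that real systems typically use. All the data values and function names are hypothetical.

```python
import math
from collections import defaultdict

# Hypothetical labelled training data: each face is reduced to two made-up
# features (mouth-corner lift, brow lowering), each in the range 0..1.
TRAINING = [
    ((0.9, 0.1), "joy"),
    ((0.8, 0.2), "joy"),
    ((0.1, 0.9), "anger"),
    ((0.2, 0.8), "anger"),
    ((0.1, 0.1), "neutral"),
    ((0.2, 0.2), "neutral"),
]


def train(samples):
    """Average the feature vectors for each label (a nearest-centroid model)."""
    grouped = defaultdict(list)
    for features, label in samples:
        grouped[label].append(features)
    return {
        label: tuple(sum(col) / len(col) for col in zip(*vectors))
        for label, vectors in grouped.items()
    }


def predict(centroids, features):
    """Return the closest label and a rough confidence between 0 and 1."""
    distances = {label: math.dist(features, c) for label, c in centroids.items()}
    # Closer centroids get larger weights; the winning weight is then
    # expressed as a share of the total.
    weights = {label: 1.0 / (d + 1e-9) for label, d in distances.items()}
    total = sum(weights.values())
    best = max(weights, key=weights.get)
    return best, weights[best] / total


centroids = train(TRAINING)
label, confidence = predict(centroids, (0.85, 0.2))
print(label, round(confidence, 2))
```

The confidence value is the important part: the model does not "know" the emotion, it only reports the closest match among the labels it has seen.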

IMPORTANT

It is critical to understand that this technology does not detect what people are thinking. It is only helpful, in a general way, for understanding what emotions people might be displaying on their faces during a specific interaction.

So where can I use Facial Coding & Speech Sentiment Analysis?

In online learning…

With the sudden advent of the pandemic, much of what we previously used to do offline is now online, and that includes learning. Ed-tech has suddenly grown into a massive and rapidly expanding segment spanning K12/school, higher education and corporate training.

On an ed-tech platform, a teacher is typically speaking with a large cohort of students all at once and, as a presenter, is unable to monitor each student’s experience, engagement levels or learning outcomes. This is where Facial Coding can be extremely useful. Most online learning today is done on video conferencing platforms, so the facial expressions of students can be monitored and analysed concurrently, and any distress or negative emotion suggesting a lack of understanding or discomfort with the subject matter can be escalated to the teacher in real time in the form of alerts & updates.

This may be especially helpful while teaching complicated or complex concepts, where facial coding can highlight & identify which students are having trouble understanding the content. This not only helps businesses to refine and enhance their teaching quality but also to improve the quality of the pre-recorded content that they might be using to illustrate or explain complex topics or constructs.
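The alerting idea described above could be sketched along the following lines. This is only an illustration under stated assumptions: it presumes an upstream facial coding step already emits one emotion label per student per video frame, and the label set, window size, threshold and student names are all hypothetical choices made for this example.

```python
from collections import defaultdict, deque

# Assumed set of labels treated as negative for alerting purposes.
NEGATIVE = {"sadness", "anger", "fear", "disgust"}
WINDOW = 5          # number of most recent frames considered per student
MIN_NEGATIVE = 3    # negative frames within the window that trigger an alert


def find_alerts(frames):
    """frames: iterable of (student_id, emotion_label) pairs in time order.

    Returns the students to flag to the teacher, in the order in which
    each one first crossed the threshold.
    """
    recent = defaultdict(lambda: deque(maxlen=WINDOW))
    alerted = []
    for student, emotion in frames:
        window = recent[student]
        window.append(emotion in NEGATIVE)
        if (len(window) == WINDOW
                and sum(window) >= MIN_NEGATIVE
                and student not in alerted):
            alerted.append(student)
    return alerted


# Made-up stream of per-frame labels for two students.
frames = [
    ("asha", "neutral"), ("ben", "joy"),
    ("asha", "sadness"), ("ben", "neutral"),
    ("asha", "anger"),   ("ben", "joy"),
    ("asha", "sadness"), ("ben", "neutral"),
    ("asha", "neutral"), ("ben", "joy"),
]
alerts = find_alerts(frames)
print(alerts)
```

Using a rolling window rather than a single frame is a deliberate choice here: one fleeting frown should probably not trigger an alert, while a sustained run of negative expressions might be worth the teacher’s attention.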

IMPORTANT

It is critical, of course, to ensure that any such monitoring and analysis is done with complete transparency and an explicit opt-in by the student or by the guardian of the student. This remains a consistent responsibility of the business deploying this technology.

In Market Research or Consumer insights

In today’s cluttered digital media universe, there is a very high cost to be paid for bad content. Creating content and user experiences costs a lot of money and time, and these assets are not easily changed, so it is critical for brands to understand the effectiveness and engagement levels of the content and user experience that they associate with their brands.

This is where Emotion AI for market research or consumer insights comes in.

Market researchers can now use facial coding and speech sentiment analysis within their consumer insight projects to help brands understand emotional and engagement-related responses to their content or user experiences.

In conclusion

It is a brave new online world out there, with multiple new technologies to be learnt and experimented with. While not every technology will add value in every use case, it is important to stay abreast of the latest advances in the Emotion AI space. At the end of the day, all consumers are human and humans buy on the basis of emotional responses, so to truly understand user behaviour, you cannot ignore human emotion.

About thelightbulb.ai:

Thelightbulb.ai is an Emotion AI & engagement analytics platform that uses computer vision, speech transcription and audio analysis to generate real-time Emotion AI & engagement analytics for remote interactions. Thelightbulb’s Emotion AI platform is VC-tool agnostic and browser-based & operates both via an integration-based model with APIs & SDKs & as a stand-alone web application. Its qualitative research platform, Insights Pro Qual, can analyze focus groups and interviews with more accuracy and speed.

With 4 patents in the pipeline and over 3.4 Mn faces scanned as of Aug-22, Thelightbulb’s face-detection, emotion-recognition & engagement mapping capabilities display high accuracy and compare favourably with industry giants. With a value-adding application layer for insights, Lightbulb provides real-time alerts & detailed emotion & engagement maps that take visual, audio & speech data into account for a holistic view of the user’s emotional state during a remote interaction.

Learn more about us at www.thelightbulb.ai

Or just click here to get a call back from our team: https://thelightbulb.ai/contact-us/